Introduction to Machine Learning

Machine learning is a branch of artificial intelligence (AI) that enables systems to learn from data and improve their performance without being explicitly programmed for each task. It is widely used in industries such as healthcare, finance, retail, and autonomous vehicles to support data-driven decisions.

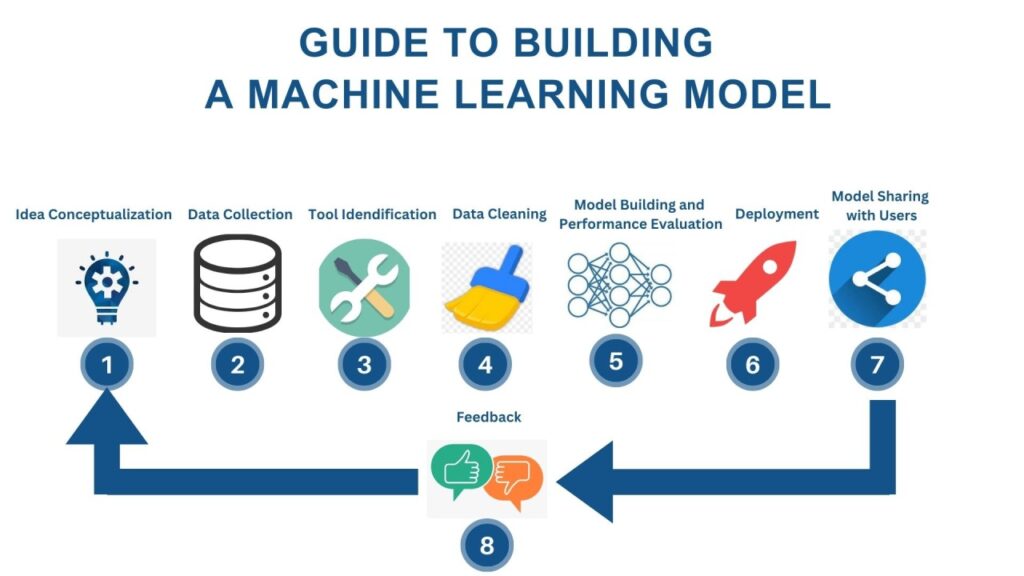

The development of a machine learning model involves multiple steps, from data collection to deployment. Each phase plays a crucial role in ensuring the model’s accuracy, efficiency, and reliability.

Step 1: Problem Definition

Before developing an ML model, it is essential to define the problem clearly. The goal is to understand what the model should predict or classify. Common machine learning tasks include:

- Classification: Assigning categories (e.g., spam detection).
- Regression: Predicting continuous values (e.g., stock prices).
- Clustering: Grouping similar data points (e.g., customer segmentation).
- Reinforcement Learning: Learning through rewards and penalties (e.g., game-playing AI).

A well-defined problem helps in selecting the right algorithms and evaluation metrics.

Step 2: Data Collection

Data is the foundation of any machine learning model. The quality and quantity of data significantly impact the model’s performance. Data can be collected from:

- Public Datasets: Open-source repositories like Kaggle and the UCI Machine Learning Repository.
- Web Scraping: Extracting data from websites using tools like BeautifulSoup.
- APIs: Accessing structured data from services like Twitter or Google Maps.
- Internal Databases: Company records, IoT devices, or transaction logs.

Ensuring the data is relevant and representative of the problem is crucial.

Step 3: Data Preprocessing

Raw data is often messy and requires cleaning before use. Key preprocessing steps include:

- Handling Missing Values: Imputing or removing incomplete records.
- Data Normalization/Standardization: Rescaling features to a common range (normalization) or to zero mean and unit variance (standardization).
- Encoding Categorical Variables: Converting text labels into numerical values.
- Feature Engineering: Creating new features that improve model performance.
- Outlier Detection: Removing or correcting extreme values that distort results.

Proper preprocessing enhances model accuracy and reduces training time.
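As a rough illustration of the preprocessing steps listed above, the following sketch uses only the Python standard library. The function names and the toy `ages` and `colors` columns are assumptions made for this example; in practice, libraries such as pandas and scikit-learn provide equivalent, more robust tools.

```python
from statistics import mean, pstdev

def impute_mean(values):
    """Replace None entries with the mean of the observed values."""
    observed = [v for v in values if v is not None]
    fill = mean(observed)
    return [fill if v is None else v for v in values]

def min_max_normalize(values):
    """Rescale values linearly to the range [0, 1]."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]  # constant column: nothing to scale
    return [(v - lo) / (hi - lo) for v in values]

def standardize(values):
    """Shift and scale values to zero mean and unit (population) variance."""
    mu, sigma = mean(values), pstdev(values)
    if sigma == 0:
        return [0.0 for _ in values]
    return [(v - mu) / sigma for v in values]

def one_hot_encode(labels):
    """Map each text label to a 0/1 vector with one position per distinct label."""
    categories = sorted(set(labels))
    return [[1 if label == c else 0 for c in categories] for label in labels]

# Hypothetical toy columns, not data from this article.
ages = [20, None, 40, 60]
colors = ["red", "blue", "red", "green"]

ages_filled = impute_mean(ages)               # the missing value becomes the mean
ages_scaled = min_max_normalize(ages_filled)  # values now lie in [0, 1]
ages_standard = standardize(ages_filled)      # values now have mean 0
colors_encoded = one_hot_encode(colors)       # columns ordered: blue, green, red
```

Mean imputation is only one option; whether to impute or drop records depends on how much data is missing and why.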

Step 4: Feature Selection

Not all features in a dataset contribute equally to predictions. Feature selection helps identify the most relevant variables, reducing complexity and improving efficiency. Techniques include:

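- Correlation Analysis: Removing one feature from each pair of highly correlated features, since they carry largely redundant information.
- Principal Component Analysis (PCA): Reducing dimensionality by combining features into a smaller set of components (strictly a feature extraction method, because it builds new features rather than choosing among existing ones).
- Recursive Feature Elimination (RFE): Iteratively eliminating weak features.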

Selecting the right features helps reduce overfitting and speeds up training.
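Correlation analysis can be sketched as follows. This is a minimal, dependency-free illustration: the 0.9 threshold, the greedy keep-first strategy, and the toy feature columns are assumptions chosen for the example, not recommendations from this article.

```python
from math import sqrt

def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length columns."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = sqrt(sum((x - mx) ** 2 for x in xs))
    sy = sqrt(sum((y - my) ** 2 for y in ys))
    if sx == 0 or sy == 0:
        return 0.0  # correlation is undefined for a constant column; treat as 0
    return cov / (sx * sy)

def drop_correlated(features, threshold=0.9):
    """Keep a feature only if it is not strongly correlated with one already kept."""
    kept = []
    for name in features:
        if all(abs(pearson(features[name], features[k])) < threshold for k in kept):
            kept.append(name)
    return kept

# Hypothetical toy features: height_in is height_cm expressed in inches.
features = {
    "height_cm": [150, 160, 170, 180, 190],
    "height_in": [59.1, 63.0, 66.9, 70.9, 74.8],
    "age": [34, 21, 45, 30, 52],
}
selected = drop_correlated(features)  # height_in is dropped as redundant
```

Because the loop keeps whichever feature of a correlated pair comes first, the column order influences the result; a real pipeline might instead keep the feature that correlates better with the target.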

Step 5: Model Selection

Choosing the right algorithm depends on the problem type, data size, and desired accuracy. Common ML algorithms include:

Supervised Learning:

- Linear Regression (for regression tasks).
- Logistic Regression (for binary classification).
- Decision Trees (interpretable models).
- Random Forest (ensemble method for better accuracy).
- Support Vector Machines (SVMs) (effective for high-dimensional data).
- Neural Networks (for complex patterns).

Unsupervised Learning:

- K-Means Clustering (for grouping similar data).
- DBSCAN (density-based clustering).

Reinforcement Learning:

- Q-Learning (for decision-making tasks).

Experimentation with different models is necessary to determine the best fit.
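One simple way to experiment is to fit several candidate models on the same training data and compare them on held-out data. The sketch below compares a mean-prediction baseline with a one-feature linear regression; the toy data and helper names are hypothetical, and mean squared error is used here only as an example criterion.

```python
def fit_mean(xs, ys):
    """Baseline model: always predict the mean of the training targets."""
    avg = sum(ys) / len(ys)
    return lambda x: avg

def fit_linear(xs, ys):
    """Simple linear regression (one feature) fitted by ordinary least squares."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    slope = (sum((x - mx) * (y - my) for x, y in zip(xs, ys))
             / sum((x - mx) ** 2 for x in xs))
    intercept = my - slope * mx
    return lambda x: slope * x + intercept

def mse(model, xs, ys):
    """Mean squared error of a fitted model on the given data."""
    return sum((model(x) - y) ** 2 for x, y in zip(xs, ys)) / len(xs)

# Hypothetical toy data that roughly follows y = 2x.
train_x, train_y = [1, 2, 3, 4, 5, 6], [2.1, 3.9, 6.2, 7.8, 10.1, 12.0]
test_x, test_y = [7, 8], [14.1, 15.9]

candidates = {"mean_baseline": fit_mean, "linear_regression": fit_linear}
scores = {
    name: mse(fit(train_x, train_y), test_x, test_y)
    for name, fit in candidates.items()
}
best = min(scores, key=scores.get)  # candidate with the lowest held-out error
```

Including a trivial baseline is a useful habit: a more complex model is only worth its cost if it clearly beats it.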

Step 6: Training the Model

The selected model is trained using the prepared dataset. The process involves:

- Splitting Data: Dividing into training and testing sets (e.g., 70% training, 30% testing).
- Cross-Validation: Checking robustness with techniques like k-fold validation.
- Hyperparameter Tuning: Optimizing hyperparameters (settings fixed before training, such as tree depth or learning rate) using tools like scikit-learn's GridSearchCV or RandomizedSearchCV.

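Training and tuning continue until the model reaches satisfactory performance on validation data (a held-out part of the training set or the cross-validation folds); the test set is kept aside for the final evaluation.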
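The splitting and cross-validation steps can be sketched without any libraries, as below. The function names mirror scikit-learn's `train_test_split` and `KFold` only loosely; the signatures, the fixed seed, and the integer stand-in rows are assumptions made for this illustration.

```python
import random

def train_test_split(rows, test_ratio=0.3, seed=0):
    """Shuffle a copy of the rows, then split them into train and test parts."""
    shuffled = rows[:]
    random.Random(seed).shuffle(shuffled)
    n_test = round(len(shuffled) * test_ratio)
    return shuffled[n_test:], shuffled[:n_test]

def k_fold_indices(n_samples, k):
    """Yield (train_indices, validation_indices) for each of k folds."""
    indices = list(range(n_samples))
    # Spread any remainder over the first folds so sizes differ by at most one.
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        validation = indices[start:start + size]
        train = indices[:start] + indices[start + size:]
        yield train, validation
        start += size

rows = list(range(10))                          # stand-in for 10 data records
train_rows, test_rows = train_test_split(rows)  # 70% training, 30% testing
folds = list(k_fold_indices(len(train_rows), k=3))
```

Each training record appears in exactly one validation fold, so every record is used for both fitting and validation across the k rounds while the test rows stay untouched.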

Step 7: Model Evaluation

Evaluating the model ensures it generalizes well to unseen data. Metrics depend on the problem type:

- Classification: Accuracy, Precision, Recall, F1-Score, ROC-AUC.
- Regression: Mean Absolute Error (MAE), Mean Squared Error (MSE), R² Score.
- Clustering: Silhouette Score, Davies-Bouldin Index.

If performance is poor, revisiting previous steps (e.g., feature engineering or model selection) may be necessary.
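To make some of these metrics concrete, here is a small sketch that computes the common classification and regression scores from their standard definitions. The label and prediction lists are made-up examples, and returning 0.0 when a denominator is zero is a convention assumed here.

```python
def classification_metrics(y_true, y_pred, positive=1):
    """Accuracy, precision, recall, and F1 for binary labels."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    accuracy = sum(1 for t, p in pairs if t == p) / len(pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}

def regression_metrics(y_true, y_pred):
    """MAE, MSE, and R² (assumes the true values are not all identical)."""
    n = len(y_true)
    errors = [t - p for t, p in zip(y_true, y_pred)]
    mae = sum(abs(e) for e in errors) / n
    mse = sum(e ** 2 for e in errors) / n
    mean_true = sum(y_true) / n
    ss_res = sum(e ** 2 for e in errors)
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return {"mae": mae, "mse": mse, "r2": 1 - ss_res / ss_tot}

# Hypothetical labels and predictions.
clf = classification_metrics([1, 0, 1, 1, 0, 1, 0, 0], [1, 0, 0, 1, 1, 1, 0, 0])
reg = regression_metrics([3.0, 5.0, 7.0, 9.0], [2.5, 5.0, 7.5, 9.0])
```

In this example the classifier makes one false positive and one false negative out of eight predictions, so accuracy, precision, recall, and F1 all come out to 0.75.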

Step 8: Model Deployment

Once the model performs well, it is deployed for real-world use. Deployment methods include:

- APIs: Integrating the model into web applications using Flask or FastAPI.
- Cloud Services: Hosting on platforms like AWS SageMaker or Google AI Platform.
- Edge Devices: Running models on IoT devices for real-time predictions.

Monitoring the model after deployment helps detect when its accuracy degrades on new data, so that it can be retrained or updated.
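For the API route, the core of a prediction endpoint is a function that validates the request and returns the model's output. The sketch below is framework-agnostic and uses only the standard library; the fixed `WEIGHTS` and `BIAS` stand in for a trained model, and the `{"features": [...]}` request shape is an assumption. A Flask or FastAPI route would wrap a function like `handle_predict`.

```python
import json

# Hypothetical stand-in for a trained model: a fixed linear function.
WEIGHTS = [0.5, -1.0]
BIAS = 2.0

def predict(features):
    """Apply the stand-in linear model to one feature vector."""
    return BIAS + sum(w * x for w, x in zip(WEIGHTS, features))

def is_number(value):
    """True for ints and floats, but not booleans."""
    return isinstance(value, (int, float)) and not isinstance(value, bool)

def handle_predict(request_body):
    """Parse a JSON request body and return (status_code, JSON response body)."""
    try:
        features = json.loads(request_body)["features"]
    except (json.JSONDecodeError, KeyError, TypeError):
        return 400, json.dumps({"error": "expected a JSON object with a 'features' list"})
    if (not isinstance(features, list)
            or len(features) != len(WEIGHTS)
            or not all(is_number(x) for x in features)):
        return 400, json.dumps({"error": f"'features' must be a list of {len(WEIGHTS)} numbers"})
    return 200, json.dumps({"prediction": predict(features)})

status, body = handle_predict('{"features": [4, 1]}')
```

Keeping validation and prediction in a plain function like this makes the logic easy to test independently of whichever web framework or cloud service hosts it.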

Step 9: Maintenance and Updating

Machine learning models require continuous monitoring and updates due to changing data patterns. Techniques for maintenance include:

- A/B Testing: Comparing new models with existing ones.
- Retraining: Updating the model with fresh data periodically.
- Bias Detection: Ensuring fairness and avoiding discriminatory predictions.

Maintaining the model ensures long-term reliability and performance.
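One possible way to notice changing data patterns is to compare recent values of a feature against the values seen at training time and flag a large shift in the mean. The sketch below is a deliberately simple illustration; the z-score test, the threshold of 3.0, and the sample values are all assumptions, and production systems typically use more thorough drift checks.

```python
from math import sqrt
from statistics import mean, stdev

def mean_shift_z(reference, recent):
    """z-score of the recent mean relative to the reference (training-time) data."""
    standard_error = stdev(reference) / sqrt(len(recent))
    if standard_error == 0:
        # Constant reference data: any difference at all counts as a shift.
        return 0.0 if mean(recent) == mean(reference) else float("inf")
    return (mean(recent) - mean(reference)) / standard_error

def needs_retraining(reference, recent, z_threshold=3.0):
    """Flag the model for retraining when the feature mean has shifted strongly."""
    return abs(mean_shift_z(reference, recent)) > z_threshold

# Hypothetical feature values: training-time data and two recent batches.
reference = [10, 12, 11, 9, 10, 11, 12, 10, 9, 11]
recent_stable = [10, 11, 10, 12, 11]
recent_shifted = [15, 16, 14, 15, 17]

stable_flag = needs_retraining(reference, recent_stable)    # no strong shift
shifted_flag = needs_retraining(reference, recent_shifted)  # clear upward shift
```

A flag like this is only a trigger for investigation or retraining; it says nothing about whether the model's predictions have actually become less accurate.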

Common Challenges in ML Model Development

1. Data Quality Issues: Incomplete or noisy data leads to poor model performance.

2. Overfitting: When a model performs well on training data but fails on unseen data.

3. Underfitting: When a model is too simple to capture underlying patterns.

4. Computational Costs: Training complex models requires significant resources.

5. Interpretability: Some models (e.g., deep learning) are difficult to explain.

Future Trends in Machine Learning

Automated Machine Learning (AutoML): Simplifying model development with automated tools.

Explainable AI (XAI): Making AI decisions more transparent.

Federated Learning: Training models across decentralized devices while preserving privacy.

Quantum Machine Learning: Leveraging quantum computing for faster computations.

Conclusion

Developing a machine learning model is a structured yet iterative process that involves problem definition, data collection, preprocessing, feature selection, model selection, training, evaluation, deployment, and maintenance. Each step requires careful consideration to ensure the model’s effectiveness in real-world applications. As machine learning continues to evolve, advancements in automation, interpretability, and efficiency will further enhance its impact across industries. Understanding these fundamentals empowers businesses and individuals to leverage machine learning effectively for data-driven decision-making.